Why You Should Use Intelligent Reporting and Skip the Templates

When it comes to creating automated content, using a heavily templated approach is very enticing. It allows you to quickly set up new content and make sure that the final narratives very closely follow the outline that you set up. I call this a ‘Mad Libs’ approach, since it follows the same basic structure as the kids’ game, where the blank words in templates are filled in with specific details. Many companies in the Natural Language Generation space have made use of this approach, often thinly papering over their templates by adding a few basic options or paraphrases.

Having watched this industry for over ten years, I’ve seen how a huge percentage of these projects (and the companies connected to them) have failed. So often, it is because template-based approaches have hidden problems that are not apparent to non-experts at first glance.

Before diving into those hidden problems, let’s first establish the alternative to templates: intelligent narratives. Rather than using templates, these narratives are built by: (1) going through data to figure out what the most interesting stories are, and then (2) allowing the most interesting stories to self-assemble into a well-structured narrative. This has the huge advantage of ensuring that all the most compelling content finds its way into the final narrative. While templates wedge the data into the narrative, intelligent reporting builds the narrative around the data.

Sounds way better, right? But then why do some companies try the templated approach? That’s where templates’ ‘hidden’ problems come into play. When comparing a single templated report with an intelligent narrative, the template will often not look that much different. In particular, the templated report will seem to do a good job hitting on the ‘main’ points (what was up, down, etc.).

The real problems happen over time, as people read more versions of the templated content and realize they are all fundamentally the same. This causes readers to (1) worry that they aren’t getting the full story, and (2) get tired of having to read through words just to get to the same pieces of data.

Narratives Are Not Predictable

If you have a big data set, the number of possible interesting stories you could write about that data is nearly endless. Which stories will wind up being the most interesting (the ones you will include in your narrative) cannot possibly be known in advance.

Take a football game, for example. Sure, the final score and the teams’ records are pretty much always going to be relevant, but beyond that, it’s chaos. You might have a game where there was a huge comeback, so the flow of scoring over the course of time is the key story. In another game, it was a blowout from the start and the key story is about a player’s stellar statistics. Or, the key story could be the revenge factor a team has after having been eliminated from the playoffs by their opponent in the previous year. I could go on, but you get the point: there is no way to write a template for all these possibilities.

Essentially, templates have to be built to talk about the types of things that always occur. One team wins; one team loses; the winning team’s record is now X; the losing team’s record is now Y; etc. However, it’s the things that rarely happen that are actually the most interesting to the reader. This goes for sports, sales figures, stock movements, you name it. Templates are therefore ironically built to show the exact information that is the least compelling to the reader.
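To make the contrast concrete, here is a minimal Python sketch of the two approaches applied to a football game. Everything in it is an assumption made for illustration (the `Story` class, the field names, the interest scores and the thresholds); it is not a description of any real system. The template always emits the same sentence, while the second approach scores candidate stories and leads with whichever one is most interesting.

```python
from dataclasses import dataclass


@dataclass
class Story:
    text: str
    interest: float  # hypothetical score; higher means more newsworthy


def template_report(game: dict) -> str:
    """'Mad Libs' approach: one fixed sentence, only the blanks change."""
    return "{winner} beat {loser} {w_pts}-{l_pts}.".format(**game)


def find_stories(game: dict) -> list:
    """Step 1: go through the data and score every story that applies."""
    stories = [
        Story(f"{game['winner']} beat {game['loser']} "
              f"{game['w_pts']}-{game['l_pts']}.", 1.0)
    ]
    deficit = game.get("largest_deficit_overcome", 0)
    if deficit >= 14:  # assumed threshold for a 'huge comeback'
        stories.append(Story(
            f"{game['winner']} rallied from {deficit} points down.",
            1.0 + deficit / 10))
    margin = game["w_pts"] - game["l_pts"]
    if margin >= 21:  # assumed threshold for a 'blowout'
        stories.append(Story(
            f"The game was a blowout, decided by {margin} points.",
            1.0 + margin / 20))
    return stories


def intelligent_report(game: dict, max_stories: int = 2) -> str:
    """Step 2: let the most interesting stories lead the narrative."""
    ranked = sorted(find_stories(game), key=lambda s: s.interest, reverse=True)
    return " ".join(s.text for s in ranked[:max_stories])


game = {"winner": "Dallas", "loser": "New York", "w_pts": 31, "l_pts": 28,
        "largest_deficit_overcome": 17}
print(template_report(game))
print(intelligent_report(game))
```

For the comeback game, the template still opens with the final score, while the ranked version opens with the rally. A real system would need far more story types and a more careful way of ordering them, but the structural difference is the same.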

There's A Reason We Don't Like 'Robotic' Writing

On top of doubting that they are getting ‘the real story’, readers who have to slog through the same information in the same arrangement over and over again will soon be begging to just see the data! This is because the words in a templated report do not add any real information, the way they do in a flexible, intelligent narrative.

The idea that words are the key to helping people understand information stems from a fundamental misunderstanding of where the power of narratives comes from. There are two main advantages of a narrative when compared to raw numbers: (1) the ability to include or exclude certain information, and (2) the ability to arrange that information into main points, counter-points, and context. Wrapping words around the exact same set of data points in every report will not realize either of these advantages.

Fundamentally, good reporting is about synthesis, not language. In fact, intelligent reports can use very little language (as in infographics) and still convey a great deal of easy-to-digest information. Without intelligent synthesis, you are better off just giving readers the key pieces of data and letting them piece together the stories themselves.

[Quick note: both this problem and the problem of missing key information are most applicable to situations where end users are reading multiple reports. It is possible you could have a use case where people are only going to read the templated report once. In my experience, however, that circumstance is rare due to the amount of work that needs to be done to set up automated reporting. You typically have to merge your data into the automated reporting system, set up the reports (which take a decent amount of time even if you are using a template), and then set up a distribution system. It’s rare for this procedure to pencil out in use cases that don’t involve readers encountering multiple reports, either because they are getting multiple reports over time (e.g. a weekly recap) or seeing reports on different subjects (e.g. reports on different sales team members).]

Starting Over Next Time

Given the large initial investment in data integration, companies are often interested in applying their automated reporting capability to new, related use cases. In this very likely circumstance, you are much better off having built out your content with flexible intelligence rather than templates. Let’s examine why that is by looking at two different scenarios.

In Scenario #1, you’ve invested in building out an intelligent Generative AI system that synthesizes your data and turns it into compelling, insightful reports. In Scenario #2, you took a shortcut and built out a template-based reporting system. The good news is that in both scenarios you will be able to quickly adapt your narrative generation technology to your new use case.

The bad news, if you are in Scenario #2, is that your new reporting will have all the drawbacks that are inherent to a Mad Libs approach, since you will simply be building a brand new template from scratch. If you built an intelligent system, however, you would be able to apply the already-built intelligent components to the new use case. This reshuffling typically takes the same amount of time that it would take to build a template. Essentially, by building intelligence instead of templates, you can quickly expand quality content at the same rate that you can expand cookie-cutter templates.

In Conclusion

Investing in quality content is going to cost more than a templated approach, and the benefits will not be obvious at the beginning. Over time, however, templates provide little to no value, while intelligent reporting will prove its worth. Trust me on this one: leave the Mad Libs to the kids.

Ok, first things first: pivot tables most certainly DO WORK… at some things. This article is not about why pivot tables are useless, but rather about the ways that pivot tables fall short of solving the data analysis needs for many companies and use cases. I also explain the fundamental reasons WHY they fall short. I focus on pivot tables because they are probably the best tools that currently exist for most companies to run data analysis. If pivot tables can’t help you with your data analysis, then it’s probably the case that no software tools can (that you know of 😉).

How Pivot Tables Can Help

Pivot tables are great at quickly surfacing the most important top-line numbers in your data. Let’s use, as an example, a retail company that has a record of every single sale they've made. They could store each sale as a row in a spreadsheet, showing the date of the sale, price, location, and a product class.

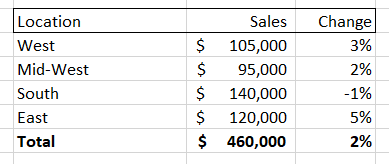

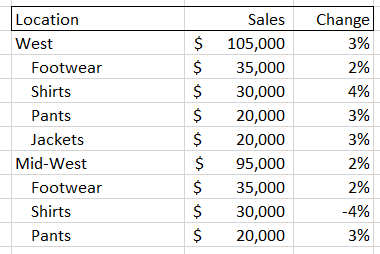

If the company had a large number of sales then the spreadsheet would quickly get unwieldy. Summing up the total sales could be helpful, but what if you wanted to dive into a particular aspect of this sales data? A pivot table gives you that ability, allowing you to, for instance, isolate sales for a given month and then break down those sales by location. It might look something like this:

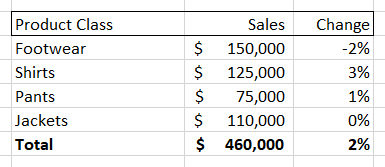

You could also choose to break down the sales numbers by product class, giving you something like this:

Pivot tables also allow you to change time periods, add new columns (like net profit, discount percent, etc.) and also easily turn these tables into charts and graphs. However, while a pivot table allows you to very easily see and visualize numbers, it only allows you to see that information along the dimension of your pivot table. So, if you are looking at the ‘location’ breakdown you can see the changes along that dimension. Same for the ‘product class’ breakdown.

But what if the interesting information that you need is at the intersection of two different dimensions? For example, both the “Midwest” and “Shirts” could individually have the numbers shown above, while sales of “Shirts in the Midwest” are down significantly. You would not be able to see this by looking at either of these pivot tables.
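A small sketch with made-up numbers shows how this can happen. The sales rows and the `pivot` and `pct_change` helpers below are invented for illustration and use only the Python standard library; they stand in for whatever spreadsheet or BI tool you actually use.

```python
from collections import defaultdict

# Made-up sales rows: (period, location, product class, sales)
SALES = [
    ("prior", "Midwest", "Shirts", 100),
    ("prior", "Midwest", "Pants", 100),
    ("prior", "East", "Shirts", 100),
    ("prior", "East", "Pants", 100),
    ("current", "Midwest", "Shirts", 60),
    ("current", "Midwest", "Pants", 140),
    ("current", "East", "Shirts", 140),
    ("current", "East", "Pants", 100),
]
COLUMN = {"location": 1, "product": 2}


def pivot(rows, *dims):
    """Sum sales per period, grouped by the chosen dimensions."""
    totals = defaultdict(lambda: {"prior": 0, "current": 0})
    for row in rows:
        key = tuple(row[COLUMN[d]] for d in dims)
        totals[key][row[0]] += row[3]
    return dict(totals)


def pct_change(cell):
    """Percent change from the prior period to the current one."""
    return round(100 * (cell["current"] - cell["prior"]) / cell["prior"], 1)


by_location = pivot(SALES, "location")
by_product = pivot(SALES, "product")
by_both = pivot(SALES, "location", "product")

print(pct_change(by_location[("Midwest",)]))       # 0.0
print(pct_change(by_product[("Shirts",)]))         # 0.0
print(pct_change(by_both[("Midwest", "Shirts")]))  # -40.0
```

In this toy data, "Midwest" and "Shirts" each look flat on their own, while the combination of the two is down 40%. Neither single-dimension table gives any hint of it.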

A quick-witted data analyst at this point will point to a flaw in my complaint: if the interesting movements in my example data are located at the intersection of the ‘location’ and ‘product class’ data, then a user could select both of those dimensions to create a new, more detailed pivot table. Something that looks like this:

But there are two problems with this solution. First, you have to proactively find the right set of intersections. As we will soon see, the number of possible combinations for most data sets is gigantic, so it may be difficult to find the key information if you don’t have significant time and/or expertise. Second, as you increase the amount of information in your pivot tables it becomes harder to actually digest it. In the examples above, we went from a very easy-to-read set of four items plus a total value, to a much harder to digest set of 16 sub-items, 4 items, and a total value. This could easily get out of control if we added a third dimension, and that’s before adding more metrics or KPIs as columns and/or having larger numbers of sub-components for each dimension.
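To get a feel for how quickly this grows, here is a short counting sketch. The dimension names and sizes are assumptions chosen for illustration (four locations and four product classes match the example above; the other two are invented).

```python
from itertools import combinations
from math import prod

# Hypothetical dimensions and how many values each one has
DIMENSIONS = {
    "location": 4,
    "product class": 4,
    "sales channel": 3,
    "customer segment": 5,
}


def rows_in_pivot(dims):
    """Number of innermost rows in a pivot table built on these dimensions."""
    return prod(DIMENSIONS[d] for d in dims)


def pivots_to_check():
    """Every non-empty combination of dimensions an analyst could select."""
    names = list(DIMENSIONS)
    return [combo
            for k in range(1, len(names) + 1)
            for combo in combinations(names, k)]


print(rows_in_pivot(["location"]))                                    # 4
print(rows_in_pivot(["location", "product class"]))                   # 16
print(rows_in_pivot(["location", "product class", "sales channel"]))  # 48
print(len(pivots_to_check()))                                         # 15
print(sum(rows_in_pivot(c) for c in pivots_to_check()))               # 599
```

With only four modest dimensions there are already 15 different pivot tables to try and 599 innermost rows across them, and that is before adding metrics or time periods.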

It's More than Just Subjects

Hopefully you have a sense of how difficult it can be for pivot tables to deal with all the different ways that subjects and groups can be broken down. Unfortunately, that is only one small problem in the world of data analysis. There are five big dimensions to data analysis. Critically, these dimensions are independent of one another, meaning that pivot tables, which start to get unwieldy when handling three dimensions of interaction, are utterly hopeless at helping users understand interesting aspects of their data that might involve seven or more dimensions of interaction. The five main classes of dimensions (each of which can contain many sub-dimensions) are:

Subject Groupings

The retail grouping example I used above is really an aspect of two different dimensions of analysis – parent grouping and children grouping. When examining data from a parent group perspective we look to see how it compares to other subjects in the same group (e.g. Midwest vs. East) or compare data to the average among all siblings (e.g. Midwest vs. All Locations). When looking at children groupings, we are looking to see which sub-components are driving the overall figures. As noted in our pivot table example above, this might involve diving into multiple dimensions (e.g. 'location' + 'product class') to determine the most relevant subject.

Timing

There are different ways to create data points using time periods (sales for the day, week, month, etc.). There are also different ways to contextualize data over time periods: we might look at how a metric has changed over time, whether it has trended up or down over a certain time period, or how it compares MTD to a similar period in the past. Both the way we create data and the way we compare it are independent dimensions (e.g. you can look at how data from this month [data creation period] compares to data from the same month last year [data comparison period]).

Metrics

These are the actual figures you care about in your data, such as ‘total dollar sales’, ‘units’, ‘average price’, etc. There is some conceptual similarity between dealing with a group of metrics and dealing with a group of subjects. The key difference is that groups of subjects combine to form their parent (such as the sales for all ‘locations’ adding up to the total sales) whereas metrics are different aspects of the same subject. Pivot tables do a reasonably good job of handling this dimension, as you can typically express different metrics using columns instead of rows. Adding more than a few columns, however, can quickly overwhelm the end user, which is a problem because end users often have a large number of metrics that they potentially care about. The use of metrics is also complicated by the fact that many metrics have different aspects that function like new dimensions of analysis. For instance, you might have a total sales figure, but then there is also the change of that figure over time.

Events

These are the different types of ‘stories’ contained within your data that end users are concerned with. Stories like ‘X metric is trending up’ or ‘Y metric is now above 0 for the first time since Z date.’ Most end users have a list of hundreds of events they care about. Pivot tables typically do not even make an attempt to handle this aspect of analysis, leaving it up to the user to deduce these events from looking at the numbers.
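As an illustration of what detecting one such event involves, here is a minimal sketch of the ‘above a threshold for the first time since Z date’ story. The function name, the input format (a chronological list of date and value pairs) and the wording of the output are all assumptions.

```python
from datetime import date


def above_threshold_event(series, threshold=0.0):
    """Return a sentence if the latest value has just moved above threshold.

    series is a chronological list of (date, value) pairs. Returns None
    when there is nothing new to report.
    """
    if len(series) < 2:
        return None
    (_, previous), (_, latest) = series[-2], series[-1]
    if latest <= threshold or previous > threshold:
        return None  # either not above, or it was already above last period
    # Walk backwards to find the last earlier date the value was above.
    for day, value in reversed(series[:-2]):
        if value > threshold:
            return (f"Above {threshold:g} for the first time "
                    f"since {day.isoformat()}.")
    return f"Above {threshold:g} for the first time on record."


history = [
    (date(2024, 1, 1), 2.0),
    (date(2024, 2, 1), -1.0),
    (date(2024, 3, 1), -0.5),
    (date(2024, 4, 1), 1.5),
]
print(above_threshold_event(history))
```

This is just one event for one metric. Multiply it by hundreds of event types, metrics and subjects and it becomes clear why leaving the deduction to the reader does not scale.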

Importance

This dimension tries to break down data by what is actually the most valuable to the end user, which requires it to sit at the intersection of all the above dimensions. It needs to weigh the inherent interest level of a particular subject, each metric of that subject, each possible time period to analyze that metric over, and each event that metric could be involved in. On top of that, this dimension incorporates other elements, such as the volume of a given subject (compared to its sibling metrics) and whether a particular event is relevant given previous reporting.
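One way to picture this weighing is as a score computed for every candidate story. The sketch below is a deliberately simple assumption: the weights, the multiplication, and the discount for previously reported stories are all invented for illustration and are not a description of how any particular product ranks content.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    subject: str
    metric: str
    period: str
    event: str
    volume_share: float  # subject's share of its parent's volume, 0 to 1


# Hypothetical interest weights for each dimension
INTEREST = {
    "metric": {"total sales": 1.0, "average price": 0.6},
    "period": {"month": 1.0, "week": 0.8},
    "event": {"record high": 1.0, "trending up": 0.7},
}
REPEAT_DISCOUNT = 0.25  # assumed penalty for a story readers already saw


def importance(c, already_reported):
    """Combine the per-dimension weights into a single score."""
    score = (INTEREST["metric"].get(c.metric, 0.5)
             * INTEREST["period"].get(c.period, 0.5)
             * INTEREST["event"].get(c.event, 0.5)
             * c.volume_share)
    if (c.subject, c.metric, c.period, c.event) in already_reported:
        score *= REPEAT_DISCOUNT
    return score


def top_stories(candidates, already_reported=frozenset(), limit=3):
    """Return the highest-scoring candidates, most important first."""
    return sorted(candidates,
                  key=lambda c: importance(c, already_reported),
                  reverse=True)[:limit]


a = Candidate("Midwest", "total sales", "month", "record high", 0.4)
b = Candidate("East", "average price", "week", "trending up", 0.5)
print(top_stories([b, a])[0].subject)  # Midwest
```

If the Midwest record high was already covered in the last report, passing it in `already_reported` drops its score below the East story, which is the ‘relevant given previous reporting’ adjustment described above.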

Just how crazy difficult it is to navigate each of these dimensions while running data analysis is obscured by the brilliant human brain. Humans have the ability to map layers of meaning on top of each other and simultaneously calculate across multiple dimensions of analysis. We can then synthesize the most important information found at the intersection of all the dimensions listed above, whether by creating a narrative, giving a presentation, or creating a set of key charts and tables. Unfortunately, this process for human beings requires expertise and intuition as they wander down pathways in the data to find those nuggets of information. It also takes a great deal of time, costs a lot of money, and can never be as thorough as a computer. Pivot tables are really just a partial shortcut, allowing data analysts to skip a couple of dimensions of analysis but still requiring them to brute-force the rest.

Time to Pivot from Pivot Tables

What if a computer could handle this task? You would then get the best of both worlds. Like a human being, it could flexibly run through multiple, independent dimensions of analysis and then synthesize its findings in a way that was easy to understand. Being a computer, it could also analyze information much more thoroughly, run its analysis very quickly, and be able to produce reports at incredible scale. infoSentience has actually created technology that can accomplish this. In brief, the key technology breakthrough is to (1) use conceptual automata designed to run an analysis for a particular dimension, (2) allow each automaton to run independently, and (3) give them the intelligence to interact with the other conceptual automata so that they can come together to form a narrative. Keep following this space for more information on just how far-reaching this breakthrough will be.

infoSentience's automated content today is the worst you will ever see it. That will be true if you are reading this article on the day I published it, and will also be true if you are reading it a year later. Why is that? Simple: every day, our content is doing one of two things: (1) staying the same, or (2) getting better. The ‘staying the same’ part is pretty straightforward, as our software will never get tired, make mistakes, need retraining, decide to change jobs, etc. On the other hand, we are constantly making improvements, and each of those improvements establishes a new ‘floor’ that will only get better. I call this the ‘improvement ratchet’ since it only moves in one direction: up! There are three key ways that automated content gets better over time:

- Improving Writing Quality

- Expanding the Content

- Better Audience Targeting

Improving Writing Quality

The most straightforward way that our automated content improves is by teaching our software how to write better for any particular use case. One way we learn is through feedback from our clients, as they see the written results and suggest changes or additional storylines. Another set of improvements comes from the iterative dance between the system output and our narrative engineers. When you give software the freedom and intelligence to mix content in new ways you sometimes come across a combination of information that you didn’t fully anticipate. For example, let’s look at this paragraph:

Syracuse has dominated St. Johns (winning 13 out of the last 17 contests) but we’ll soon see if history repeats itself. Syracuse and St. Johns will face off at Key Arena this Sunday at 7:00pm EST. St. Johns has had the upper hand against Syracuse recently, having won their last three games against the Orange.

This paragraph works reasonably well, but the first and third stories aren’t tied together as well as they could be. In this case, the final sentence (about St. Johns dominating recently) would be improved by incorporating the information from the first sentence (about Syracuse having a big advantage overall). The updated version of the last sentence would read like this:

Despite Syracuse’s dominance overall, St. Johns has had the upper hand recently, having won their last three games against the Orange.

This improvement is an example of what we call an ‘Easter Egg’, where we add written intelligence that is targeted to an idiosyncratic combination of events. Our reports contain hundreds of possible events adding up to millions of possibilities. Adding intelligence to these events allows them to combine together properly and avoid repetition. However, there’s no way to build out specific language for all possible interesting combinations in advance. As we read actual examples we come across unique, interesting combinations. We can then add specific writer intelligence that covers these combinations to really make the reporting ‘pop’.

Critically, this intelligence is usually a bit broader than just a simple phrase that appears in only one exact combination of events. In the example above, for instance, we would add intelligence that looks for the contrast between a team’s overall record against an opponent and their recent record and allow that intelligence to work in any such situation. We also need to make good use of our repetition system to make sure that all these Easter Eggs don’t start tripping over themselves by repeating information that was already referenced in the article.
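Here is a rough sketch of what a rule like that could look like. The function name, the 70% ‘dominance’ cutoff, the three-game streak cutoff and the `already_mentioned` flag (a stand-in for the repetition system) are all assumptions for illustration, not our actual implementation.

```python
def head_to_head_contrast(leader, trailer, overall, recent_streak,
                          already_mentioned=False):
    """Return a contrast sentence, or None if the situation doesn't apply.

    overall is (wins, games) for the leader against the trailer.
    recent_streak is how many straight recent meetings the trailer has won.
    already_mentioned says whether the overall record appeared earlier in
    the article, so the sentence can refer back to it instead of repeating it.
    """
    wins, games = overall
    dominant = games > 0 and wins / games >= 0.7  # assumed cutoff
    if not dominant or recent_streak < 3:         # assumed cutoff
        return None
    if already_mentioned:
        opener = f"Despite {leader}'s dominance overall"
    else:
        opener = f"Although {leader} has won {wins} of the last {games} meetings"
    return (f"{opener}, {trailer} has had the upper hand recently, "
            f"having won their last {recent_streak} games.")


print(head_to_head_contrast("Syracuse", "St. Johns", (13, 17), 3,
                            already_mentioned=True))
```

Because the rule is written in terms of ‘overall record versus recent record’ rather than two specific teams, it fires for any matchup with the same shape, and the flag keeps it from restating a number the article already gave.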

Expanding the Content

Another way that content improves is by quickly expanding into similar use cases. For example, when we started with CBS we only provided weekly recaps for their fantasy baseball and football players. We soon expanded to offering more fantasy content: weekly previews, draft reports, year-end recaps, and more. Because those were successful, they then asked us to provide previews and recaps of real-life football and basketball games. We quickly added soccer, and then expanded the range of content by also providing gambling-focused articles for each of those games.

This same story has played out with many of our other clients. One of the big reasons for this is that automated content is so new that it’s often difficult to grasp just how many use cases it has. After seeing it in action, it’s much easier to imagine how it can help with new reporting tasks.

The other big reason that automated content often quickly expands is that the subsequent use cases are often cheaper to roll out due to economies of scale. There are three main steps to generating automated content:

- Gather data

- Create the content

- Deliver it

Each of these steps is usually much easier when rolling out follow-up content. In the case of Step #1, gathering data, it is sometimes the case that literally the exact same data can be used to generate new content. This happened when we expanded from general previews to gambling-focused previews for live sports games, which just emphasized different aspects of the data we were already pulling from CBS. Even if there are additional data streams to set up, it’s usually the case that we can still make use of the original data downloads as well, which typically reduces the amount of setup that needs to take place.

When it comes to Step #2, creating the content, infoSentience’s ‘concept based’ approach pays big dividends. Instead of creating Mad-Lib style templates, infoSentience imparts actual intelligence into its system. That allows the system to be flexible in how it identifies and writes about the most important information in a data set. It also means that it can quickly pivot with regard to things like: the subjects it writes about, the time periods it covers, the length of the articles, the way it adds visualizations, the format of the report, the importance of certain metrics and storylines, and many more. Entire new pieces of content can often be created just by turning an internal ‘dial’ to a new setting.

Finally, for Step #3, there is usually a tremendous amount of overlap when it comes to the delivery process for follow-up content. Typically, we will coordinate closely with our clients to set up an initial system for delivery. This might entail dropping our content into an API ‘box’ that our clients then access, but other times we send out emails ourselves or set up a web site to host the content. We might also set up a timing system to deliver content on demand or at particular intervals. It is often the case that these exact same procedures can be used for follow-up content.

A great example of how all these steps came together is when we expanded to providing soccer content for CBS. In that case, the data pulldown and delivery procedures were identical, requiring no changes at all from CBS. While we did create some soccer-specific content, much of the sports intelligence for soccer was able to make use of the existing sports intelligence we had built into the system.

Better Audience Targeting

Finally, another way that automated content improves is from user feedback. Automated content allows for a level of A/B testing that would be impossible using any other method. I’ve already mentioned that our AI can change what it focuses on, its time periods, length, format, and more. It can also use different phrase options when talking about the same information, and even change the ‘tone’ that it uses. All of these options can be randomized (within bounds) when delivering content on a mass scale. It is a simple task to then cross-check user engagement with each of these variables to determine what the optimal settings are.
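The randomize-then-cross-check loop described above can be sketched in a few lines. The option names, the log format and the helper functions below are assumptions for illustration; a production test would also need enough volume and a proper significance check before declaring a winner.

```python
import random
from collections import defaultdict

# Hypothetical options the content system can vary, within bounds
OPTIONS = {"tone": ["neutral", "playful"], "length": ["short", "long"]}


def assign_variant(rng):
    """Pick one value per option at random for a single delivered report."""
    return {name: rng.choice(values) for name, values in OPTIONS.items()}


def engagement_by_option(log):
    """log is a list of (variant, engaged) pairs; returns rates per value."""
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for variant, engaged in log:
        for name, value in variant.items():
            cell = counts[name][value]
            cell[0] += int(engaged)  # reports that were engaged with
            cell[1] += 1             # reports delivered
    return {name: {value: hits / sent for value, (hits, sent) in vals.items()}
            for name, vals in counts.items()}


def best_settings(log):
    """For each option, the value with the highest engagement rate."""
    rates = engagement_by_option(log)
    return {name: max(vals, key=vals.get) for name, vals in rates.items()}


log = [
    ({"tone": "playful", "length": "short"}, True),
    ({"tone": "playful", "length": "long"}, True),
    ({"tone": "neutral", "length": "short"}, False),
    ({"tone": "neutral", "length": "long"}, True),
]
print(assign_variant(random.Random(0)))
print(best_settings(log))  # {'tone': 'playful', 'length': 'long'}
```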

It's also possible to allow individual readers to customize their content however they want it. All of the ‘options’ mentioned above can be exposed to end users, allowing them to specify exactly what they want to see. This not only allows users themselves to improve the content they see, but also gives organizations a better understanding of the information that each of their customers really cares about.

Conclusion

So much of our time in business and life is spent in a losing fight against entropy. Automated content provides a welcome break from that struggle. Set it up and enjoy great benefits from day one, knowing that the only changes that will ever take place are for the better.

Although Generative AI is making headlines every day, it is still a new concept to most people. Furthermore, Data Generative AI (DGA) is even less well known, so many of the people I talk to struggle to understand when it makes sense to make use of it. In this post, I’ll give a quick synopsis of what DGA is, then walk through some requirements you’ll need to have in order to implement it.

What is Data Generative AI?

Many people have recently become aware of the power of generative AI by looking at ChatGPT. This is a system that has been trained on large sets of text and can create written responses to written queries. DGA, in contrast, creates written reports out of large data sets. It analyzes the data, figures out what is most important/interesting and writes out its analysis in an easy-to-read narrative. In essence, ChatGPT and other Large Language Models (LLMs) go from language → language, while DGA systems go from data → language.

In theory, this opens up all data sets to automatic AI analysis and reporting. In practice, there are technical and economic reasons that the current use of DGA can only be applied to certain kinds of data sets.

The five things you’ll need to make use of DGA are:

- Accessible Data

- Structured Data

- Complicated Input

- Complicated Output

- Significant Scale

Accessible Data

The first step that a DGA system takes when building a report is to ingest data. Since one of the main goals of using DGA is to automate reporting processes, it figures that the process of ingesting data has to be completely automatic as well. This means that you’ll need to have some way of automatically delivering your data to a DGA system. Typically, this means setting up an API with a private authentication key to allow the DGA to pull down data. However, there are other methods as well, such as sharing a flat file or giving access to an online spreadsheet such as Google Sheets or Office 365. If security is a concern, it could even be possible to build the DGA system using a sample data set and then transfer the program onto your servers so it could run without the data ever leaving your organization.

The good news is that while you might need to create a new access point for your data, you will rarely need to change the format or structure of how your data is stored. This is because all of the data coming into a DGA system needs to be manually placed into a conceptual hierarchy. This process takes the same amount of time whether you have one API with all of your information in JSON format, or 10 different data silos with a mix of formats. In fact, one of the great benefits of DGA is the ability to pull in information from anywhere and make it all understandable and accessible.

Structured Data

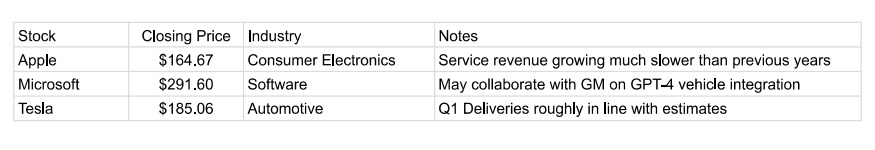

Accessing the data is only part of the equation, however. The other key requirement is that the critical data you want to analyze is ‘structured’ data. Determining what counts as ‘structured’ data is a bit tricky, so let’s go over an example. Here is a simple spreadsheet of stock data:

In this example, “Closing Price” is a perfectly structured piece of data. It has a clearly definable metric (Closing Price) that is represented by a number. This makes it really easy for DGA systems to work with it. Slightly less structured is the data in the “Industry” column. In this case, you have a text value that corresponds to all possible industries. Whether a variable like this would be considered structured or not typically comes down to: (1) how it will be used, and (2) how many different text options exist. If it is just as simple as having the system assign an ‘industry’ value to each company and group it with other companies that have that same industry, then this can be pretty easy. But imagine if there were 100 different industry types. In that case, you might want to assign each company a higher-level organizational value (like a ‘sector’) that comprises many industries. This might need to be set up manually as part of the data ingestion process. Furthermore, if you have many possible text values it’s likely that some of them might need spelling or grammar intelligence attached to them (such as whether they are singular or plural).
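As a sketch of what that manual setup might look like, the snippet below rolls a free-text ‘industry’ value up into a ‘sector’. The mapping table, the company names and the `group_by_sector` helper are all made up for illustration.

```python
from collections import defaultdict

# Hypothetical, hand-maintained mapping from industry text to sector
INDUSTRY_TO_SECTOR = {
    "semiconductors": "Technology",
    "software": "Technology",
    "banks": "Financials",
    "insurance": "Financials",
}


def group_by_sector(companies):
    """Group company names by sector; collect names that need manual review."""
    groups = defaultdict(list)
    unmapped = []
    for company in companies:
        # Normalize the text so 'Software' and ' software ' match the same key
        key = company["industry"].strip().lower()
        sector = INDUSTRY_TO_SECTOR.get(key)
        if sector is None:
            unmapped.append(company["name"])
        else:
            groups[sector].append(company["name"])
    return dict(groups), unmapped


companies = [
    {"name": "Acme Chips", "industry": "Semiconductors "},
    {"name": "Bolt Bank", "industry": "banks"},
    {"name": "Cloudy", "industry": "Software"},
    {"name": "Dune Mining", "industry": "Mining"},
]
groups, unmapped = group_by_sector(companies)
print(groups)    # {'Technology': ['Acme Chips', 'Cloudy'], 'Financials': ['Bolt Bank']}
print(unmapped)  # ['Dune Mining']
```

The `unmapped` list is the part that tends to need a human: every text value the mapping has not seen before is a small decision about where it belongs.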

Looking at the "Name" column, we see an example of text-based information that is minimally structured for data purposes, but can be effectively used for labeling. You can’t use this text to derive substantive information about the company, but you can simply insert it into the narrative. Again, there might be complications with data like this because of grammatical issues.

Finally, we get to the ‘Notes’ column. Sentence and paragraph length text is the least structured data. Of course, the ability of AI to structure text is improving all the time. Things like sentiment analysis or word clouds can be effective ways to structure that data and integrate it into the narrative. In general, though, DGA is called Data Generative AI for a reason, and so if any critical information is found in long-form text it is probably not a great candidate to get automated.

Complicated Input

You are generally going to need a lot of data to justify setting up a DGA system for the simple reason that if you don’t have a lot of information then you probably don’t need an automated system to analyze and synthesize it. Essentially, DGA is great at solving data-overwhelm, but that means you need an overwhelming amount of data.

Most of you reading this have probably already skipped to the next section, saying something along the lines of “yeah, having too little data is not my problem.” But, for those of you unsure if you have enough (and to get the wheels turning for the rest of you) it’s good to think about just how many different ways there are to have an ‘overwhelming’ amount of data.

Having a long sequence of data over time can easily make for a difficult analysis, particularly if placing the values of the current time period (such as this month’s sales data) into historic context is important. Another way that complexity can occur is due to the interaction of groups. For instance, imagine a single retail sale that occurs. That sale occurred in a particular location (that might have many levels- city, state, country, etc.), for a particular product (that might have a color, style, category, department, etc.), and sold to a particular person (that might have demographic information, sales channel info, marketing tracking info, etc.). All of these individual pieces of information might have interesting interactions, and the number of possible combinations can add up quickly.

Complicated Output

While you might have a lot of data, there is also the question of what type of reporting you need to derive from that data. It’s possible to have a tremendous amount of data that can be crunched down to just a few key metrics. In that case, you need algorithms that look through the data and crunch the numbers, but you don’t need DGA. DGA is used for synthesis, in situations where there are lots of things that are potentially interesting, but you want a story about just the most important ones.

Let’s illustrate this point using a football game. When the Dallas Cowboys play the New York Giants, a tremendous amount of data is generated. There is the play-by-play of the game, the total stats for each team, the total stats for each player, the history of the stats for each team and player, the upcoming schedule, the previous matchups between the two teams, and many more! Clearly, this amount of data satisfies the ‘complicated input’ requirement.

But let’s say that you only care about one thing- ‘who won the game?’ In that case, you don’t need a synthesis from DGA, since even though a huge number of things happened, they can all be boiled down to the final score. But what if your question is ‘what happened in the game?’ In that case, you need DGA to parachute in, examine thousands of possible storylines, and organize and write up just the most important ones. DGA works great in situations where thousands of things could happen, hundreds of things did happen, but you just want to read about the 10-15 most important events.
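For the football game, that funnel from "could happen" to "did happen" to "worth writing about" can be sketched in a few lines of Python. This is only a minimal illustration: the `Story` class, its `triggered` flag, and the `score` field are assumptions made for the example, and a real system would need far more than a single interest score.

```python
import heapq
from dataclasses import dataclass

@dataclass
class Story:
    name: str
    triggered: bool  # did this event actually occur in the game?
    score: float     # hypothetical "interestingness" score

def select_top_stories(candidates, limit=10):
    """Keep only events that happened, then return the highest-scoring few."""
    happened = [story for story in candidates if story.triggered]
    return heapq.nlargest(limit, happened, key=lambda story: story.score)
```

If the only question is who won, none of this is needed. The selection step earns its keep only when many events are competing for a limited number of sentences.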

This example is also a good illustration of the importance of a narrative in telling a story. Modern dashboards are usually the equivalent of a ‘box score’ for a sporting event. It’s certainly interesting sometimes to go through a box score, but most people would much rather read a story about what happened in the game than read through the numbers and try to figure out what happened on their own.

Scale

DGA has to ingest data from your unique data sources, organize it into a conceptual hierarchy, create compelling content from it, and then format and deliver that content to particular end points. Unfortunately, all this takes a decent amount of up-front effort. That means that DGA doesn’t make a lot of sense unless you have a lot of reports you need built. Exactly how many reports depends on the value of each one, but it would typically be at least 1,000 over the course of a year (numbers of 1,000,000+ per year are not uncommon).

Often, people will ask me about automating a particularly onerous report within their organization that someone on their team has to put together every month. Unfortunately, if a report takes half of one employee’s time, that usually means that: (1) we’re not providing much value if we automate it, and (2) that report is really long and complicated, making it extra expensive to build out.

Instead, think about using DGA when you:

- Need a unique report to go out to a lot of individual readers

- Have many subjects within your data that you want separate reports on

- Want reports on your data to be continually updated

Or some combination of the three.

You Can Do It!

Hopefully this guide gives you a good sense of exactly what you need to be a good candidate to apply DGA to your data sets. If your use case satisfies all five requirements then you can bask in the power of being able to create beautiful, insightful reports as often and at whatever scale you choose.

There are lots of tricky problems when it comes to generating high-quality automated reports, and repetition is one of the toughest. Repetition is a difficult problem for automated writing systems.

Do those sentences read well together? I’m guessing you probably think ‘no’. They seem pretty clearly repetitive, but that’s only obvious from a human perspective. From a computer’s perspective, however, it’s not so clear.

Why that is and how we can try to get around that will be the subject of this post. This one will (hopefully) be part of a series of posts where I go into a bit more detail about the technical challenges that underlie high-quality data-focused generative AI. A lot of these things are problems, like repetition, that are hard to even notice if you haven’t spent time in the AI trenches, as we don’t think twice about them as humans.

First, why is repetition even an issue in the first place? If you build automated reports using templates, it isn’t. That’s because you know exactly what stories are going to appear at each point in a narrative, so you can use the awesome repetition fighting powers of your human brain to make sure that the template avoids any repetition.

Using a template is severely restricting, however, because the template can’t flexibly adapt to the underlying data, and therefore can’t possibly report on the most important information that the reader needs to see. The best way to set up an automated report is to allow the system to individually identify each event within the data set and then build a narrative out of only the best parts.

However, once you’ve freed the software from templates and given it flexibility in how it arranges information, you’ve also summoned the Kraken that is repetition. To understand how tricky that problem can be, let’s paraphrase the pair of sentences that started this post:

There are lots of high-scoring wide receivers in the NFC, and DeAndre Hopkins is one of the best. DeAndre Hopkins is a good fantasy wide receiver.

We’d say this is repetitive because of the double mention of DeAndre Hopkins being a good receiver, but let’s look at it from a computer’s perspective. The first sentence is actually made of two parts: (1) identifying that there are many high-scoring WRs in the NFC, and (2) saying DeAndre Hopkins is good. The second sentence is just about DeAndre Hopkins being good. For software, these two sentences are not the same, since the first has two components and the second sentence has just one. Ah, you say, but what if we give software the ability to recognize each of the two subcomponents of the first sentence so that it can understand that it conflicts with the second sentence? Well, that’s a good idea in general, but it won’t save you in this case, because the two sentences in this example don’t even share the same sub-component.

The first sentence says that DeAndre Hopkins is ‘one of the best’ WRs while the second sentence merely identifies DeAndre Hopkins as being good. The issue here is that in order to get software to write these sentences you would need to build the capability to have it both identify a ‘good’ WR and also rank order them and identify some subset that would be considered a grouping of ‘the best’. These are two different operations, so the system would not inherently see them as being the same thing.
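Here is a rough Python sketch of why the software sees two separate things. The point values, the 15-point cutoff, and the group size are all invented for illustration, and the function names are hypothetical. One story fires from a threshold check, the other from a ranking.

```python
def is_good(points, player, threshold=15.0):
    """'Good' trigger: compares one player against a fixed cutoff (assumed value)."""
    return points[player] >= threshold

def is_one_of_best(points, player, group_size=2):
    """'One of the best' trigger: ranks everyone and checks the top group."""
    ranked = sorted(points, key=points.get, reverse=True)
    return player in ranked[:group_size]

def triggered_stories(points, player):
    """Return the names of every story that fires for this player."""
    stories = []
    if is_good(points, player):
        stories.append("good_wr")
    if is_one_of_best(points, player):
        stories.append("one_of_best_wr")
    return stories

# Made-up fantasy points per game for four anonymous wide receivers.
wr_points = {"WR A": 18.5, "WR B": 17.0, "WR C": 15.2, "WR D": 9.4}
print(triggered_stories(wr_points, "WR A"))
```

For "WR A" both stories fire, and nothing in the code says they are about the same idea. They come from different calculations and carry different names, which is exactly how the duplicate sentences end up in the report.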

This is an example of a conceptual repetition problem, where there are two events or stories identified by an NLG system that are different (including involving different calculations and a different ‘trigger’) but are conceptually similar enough that it doesn’t make sense to include them both in the same report.

Building a conceptual hierarchy is the first step towards solving this problem. If the two stories above share some parent concept then the system can begin to recognize them as being duplicative. However, it’s not quite that simple, as many stories could share a parent while still being able to coexist in a narrative. For example, ‘team on a winning streak’ and ‘team on a losing streak’ could both share a ‘streak story’ parent, and yet could make sense in the same article (“The win is the third in a row for Team A, while the loss is the fourth in a row for Team B”).
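A minimal Python sketch of that idea follows. The concept names, the `PARENT` table, and the `COEXIST_OK` set are assumptions invented for this example. The sketch only shows the shape of the check: find the nearest shared parent, then ask whether that parent allows its children to appear together.

```python
# Hypothetical story hierarchy: each concept maps to its parent concept.
PARENT = {
    "good_wr": "player_quality",
    "one_of_best_wr": "player_quality",
    "winning_streak": "streak",
    "losing_streak": "streak",
    "player_quality": "story",
    "streak": "story",
}

# Assumed: parents whose children can sit together in one narrative.
COEXIST_OK = {"streak", "story"}

def ancestors(concept):
    """Return the concept followed by its chain of parents up to the root."""
    chain = [concept]
    while concept in PARENT:
        concept = PARENT[concept]
        chain.append(concept)
    return chain

def shared_parent(a, b):
    """Return the nearest concept that both stories fall under, or None."""
    b_chain = set(ancestors(b))
    for concept in ancestors(a):
        if concept in b_chain:
            return concept
    return None

def is_duplicative(a, b):
    """Flag a pair only when its nearest shared parent forbids coexistence."""
    parent = shared_parent(a, b)
    return parent is not None and parent not in COEXIST_OK
```

With this table, the two "good receiver" stories are flagged as duplicates, while the winning-streak and losing-streak stories are allowed to coexist even though they share a parent.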

That brings us to another problem with repetition: dealing with different objects referenced by the stories. Going back to the DeAndre Hopkins story, it’s duplicative to mention that he is both ‘one of the best WRs’ and also that he ‘is a good WR’, but it wouldn’t be duplicative to mention that some other WR is good. That said, if you were talking about 5 different players, it might start to get repetitive to mention over and over again that each of them was ‘one of the best’ at their position.

Therefore, the conceptual hierarchy needs to be able to recognize, for any given pair of sentences, the conceptual ‘distance’ that each item is from the other. It can take into account the nature of commonalities between the events in both stories and also look at factors such as whether they are being applied to different objects (which themselves would have a ‘conceptual distance’ between them, e.g. WR is closer to RB than WR is to Team) and also the inherent repetition factor of a given story. Typically, events that are more unique (such as a player scoring their highest total in a stat for the season) are more prone to repetition concerns than something like a team winning or losing a given game, which is bound to happen. In the example above talking about five different WRs, it would sound repetitive to talk about each of them achieving a recent season-high in a stat, even if they were different stats. If you were giving a synopsis about the recent performance of five teams however, it wouldn’t feel as duplicative to mention the won/loss result of each team’s recent game.
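One simple way to picture the ‘conceptual distance’ between objects is to count steps through a tree. The sketch below is an assumption-laden illustration: the `OBJECT_PARENT` table is invented, and counting edges to the nearest common ancestor is just one of many possible distance measures.

```python
# Hypothetical object hierarchy: each object type maps to its parent type.
OBJECT_PARENT = {
    "WR": "Player",
    "RB": "Player",
    "Player": "Entity",
    "Team": "Entity",
}

def path_to_root(node):
    """Return the node followed by each of its parents up to the root."""
    path = [node]
    while node in OBJECT_PARENT:
        node = OBJECT_PARENT[node]
        path.append(node)
    return path

def conceptual_distance(a, b):
    """Count the steps from a up to the nearest common ancestor and down to b."""
    path_a = path_to_root(a)
    path_b = path_to_root(b)
    for steps_a, node in enumerate(path_a):
        if node in path_b:
            return steps_a + path_b.index(node)
    raise ValueError("no common ancestor")

print(conceptual_distance("WR", "RB"))
print(conceptual_distance("WR", "Team"))
```

In this toy tree, WR to RB is 2 steps and WR to Team is 3, which matches the intuition that a wide receiver is closer to a running back than to a team.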

Another aspect of ‘distance’ that is important is the distance between each sentence in a narrative or sequence of narratives. A sentence might seem a bit duplicative following directly after a very similar sentence, but might not seem repetitive at all coming two paragraphs later. This is a big potential issue with reports that are in sequence with each other, such as a stock report that goes out every day. There are some things that make sense to mention in each report regardless of whether they appeared the day before, such as the market being up a lot. Other things, such as a given stock having really good analyst ratings, would be tedious if mentioned every single day.

Having balanced all the above complicated issues related to repetition, you run smack dab into another huge problem- what if you WANT something to be repetitious? For example:

The Golden State Warriors weren’t even playing the same game as the Timberwolves on Friday, getting trounced 132-98. Not only did they get blown out, but the loss knocked them out of the last guaranteed playoff spot.

I think this paragraph reads well. However, if you look at the last sentence, it is composed of two parts: (1) team got blown out, and (2) team out of the playoffs. The first part, ‘team got blown out’ was just mentioned in the previous sentence. Therefore, the narrative generation system has to take into account another factor, which registers how a particular piece of information is being used within an article and whether that precludes, or in fact invites, one or more mentions of that same piece of information.

So, we’ve established that good narrative generation software has to balance:

- The conceptual distance between the ‘events’ behind any two sentences

- The conceptual distance between any objects identified in those sentences

- The inherent repetitiousness of each event in the sentences

- The distance between the two sentences within a narrative (or sequence of narratives) and the effect of that distance

- Whether that repetition is even a problem at all or rather is the whole point of the structural arrangement.

Each of these factors is an independent dimension, so they must all be balanced simultaneously.
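To show what balancing these dimensions at once might look like, here is a toy Python scoring function. Every field name, the 0-to-1 scales, the formula, and the `decay` parameter are assumptions made up for this sketch. It is not a description of any production system, just one way the five factors could be combined into a single number.

```python
from dataclasses import dataclass

@dataclass
class PairContext:
    event_distance: float     # 0 = same concept, 1 = unrelated concepts
    object_distance: float    # 0 = same object, 1 = unrelated objects
    repetition_factor: float  # 0 = routine event, 1 = highly distinctive event
    sentences_apart: int      # 1 = adjacent sentences
    intentional: bool         # True when the repeat is a deliberate callback

def repetition_penalty(ctx, decay=0.5):
    """Return a 0..1 penalty for including both sentences of a pair."""
    if ctx.intentional:
        return 0.0  # a deliberate callback is not penalized
    concept_overlap = 1.0 - ctx.event_distance
    object_overlap = 1.0 - ctx.object_distance
    # Distinctive events still feel repetitive across different objects.
    effective_object = object_overlap + (1.0 - object_overlap) * ctx.repetition_factor
    # The penalty fades as the two sentences get farther apart.
    proximity = decay ** (ctx.sentences_apart - 1)
    return concept_overlap * effective_object * proximity
```

Under these assumptions, five season-high stories about five different players still collect a penalty, five routine win/loss results do not, and the ‘Not only did they get blown out’ callback passes through untouched.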

The worst part is, when it’s done right, absolutely nobody notices! When the software creates a paragraph that contains three related sentences that somehow don’t step on each other, we take it for granted, since human brains are so exceptionally tuned to understanding conceptual overlap that we don’t even consciously recognize avoiding repetition as ‘thinking’ at all.

That’s the bad news. The good news is that effectively dealing with repetition has given infoSentience’s technology a big leg up against the competition. It’s not something concrete we can point to, but rather it allows for higher quality, more insightful content to be built in the first place. And while difficult, embedding this intelligence into software allows us to do things that can’t be done by humans. For example, we can personalize repetition for each individual reader in a sequence of reports. Instead of automatically ‘repping out’ a story that appeared in the previous report, we can check to see if an individual read the previous report, and if not, simply skip any repetition issues presented by the previous report. That’s just one of the many ways that automated content can go beyond human capability once you’ve been able to mimic human conceptual thinking.
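The per-reader idea can be sketched very simply in Python. The function name, the story labels, and the `read_previous` flag (which would have to come from something like open tracking) are all hypothetical. The sketch only shows the core decision: the previous report counts against repetition only for readers who actually saw it.

```python
def stories_for_reader(candidates, previous_report, read_previous):
    """Drop stories from the prior report only if this reader actually read it."""
    already_seen = set(previous_report) if read_previous else set()
    return [story for story in candidates if story not in already_seen]

today = ["strong_analyst_ratings", "new_52_week_high"]
yesterday = ["strong_analyst_ratings"]

print(stories_for_reader(today, yesterday, read_previous=True))
print(stories_for_reader(today, yesterday, read_previous=False))
```

A reader who opened yesterday’s report gets only the new story, while a reader who skipped it gets both.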